Measure it till you make it

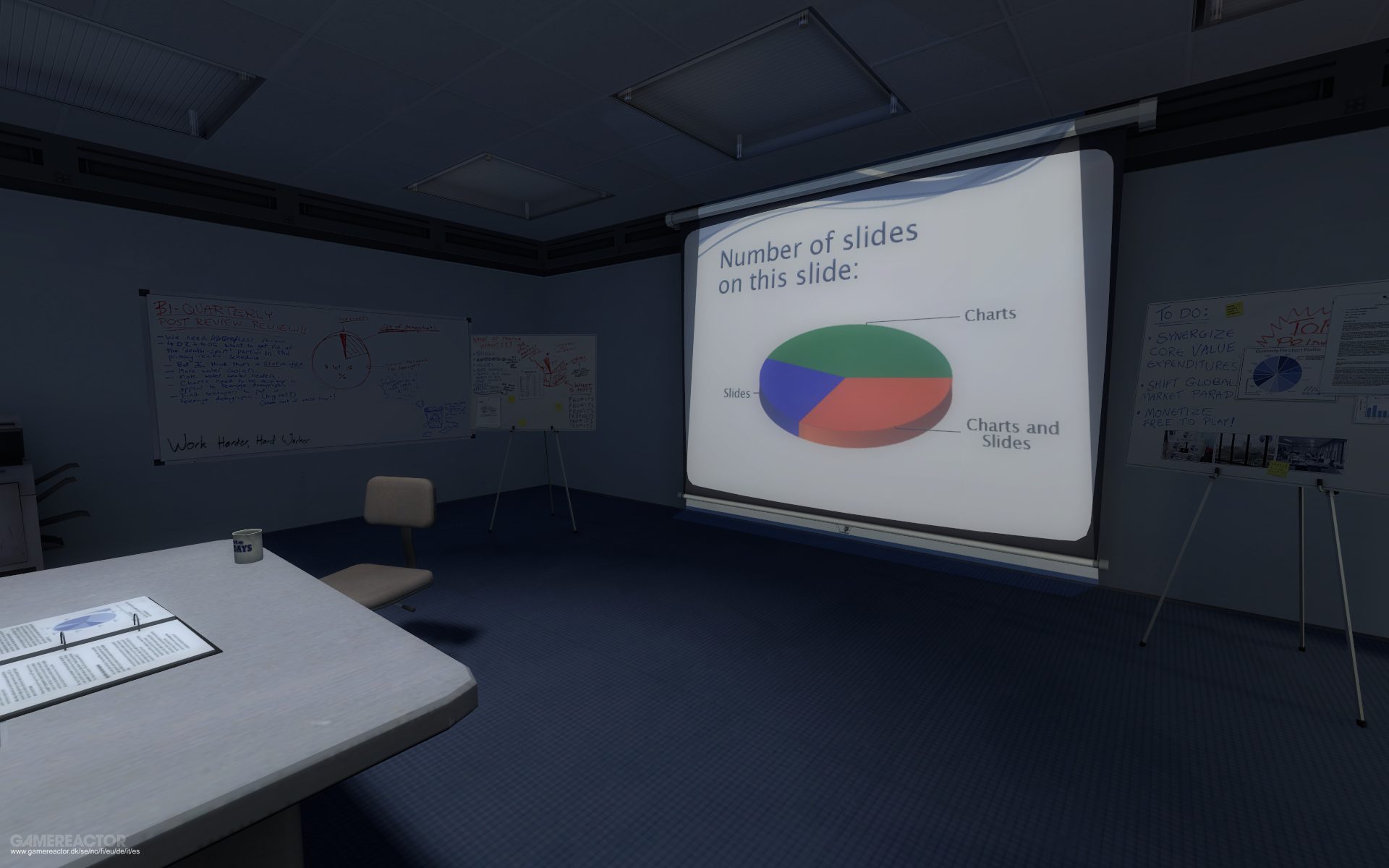

There’s a string of questions that haunt every technical writer and documentation manager at some point in their careers: How do we know that we’ve done a good job? Have we been successful given our limited resources? How can we get better at what we do? Are the docs nailing it? How can we measure value? What do we tell upper management? More importantly, will we know what we’re saying when presenting those figures in slides? And, can you point me to the nearest emergency exit?

We’ve all been there, and we keep getting there. We get there for a couple of reasons, one good and one bad. The good reason is that our work matters to the organization employing our services – nobody would care to know how it’s going otherwise; the less flattering reason is that resources are not infinite, so we need to justify the cost of producing documentation in delicate times. Unfortunately, we can’t just write docs because it’s the right thing to do: it also has to be visibly beneficial.

Of course you must track performance

Technical writers don’t chuck wood, mine ore, or catch fish. We don’t build cars, nor do we repair toasters. As writers in tech, we belong to the service sector, where performance measurement is, at best, a fluffy affair. When you’re producing and selling words and numbers, understanding your throughput is complicated unless you reach some sort of consensus as to what success looks like, coming up with made-up goalposts, also called key performance indicators, or KPIs. Even then, it keeps being complicated.

Writing is not a manufacturing process. Granted, there are some manufacturing-like elements of the writing process as you get closer to publishing the content—and honestly, making that part of the process as commodity-like as possible has some benefits. But, writers add the most value to the content where the process is complicated and non-linear: towards the beginning, where concepts, notions, and ideas are brought together to become a serial string of words.

—Bob Watson in Why I’m passionate about content metrics (2018)

To make matters thornier, tech writers have often found themselves producing docs in quiet bubbles, shielded like hobbits from success indicators and business metrics. This idyllic status quo can have disastrous effects for our profession (which, let’s not forget, was born out of massively funded war efforts): modern businesses aim at being lean, ruthlessly erasing inefficiencies in a never-ending Six Sigma trance. Tech writers, in this sense, need to own their metrics and performance indicators, or at least join the conversation.

Nobody has really nailed it, though. Content strategy, our cousin field, grew out of the need to own the strategic conversation around writing for businesses, and has similarly struggled with conversations around metrics. In the unforgettable keynote Content & Cash: The Economics of Content, Melissa Rach says that “numbers don’t need to be exact” and advocates for getting rid of fear when talking about returns and costs. This is precisely the same hands-on, no-nonsense mindset promoted by Bob Watson:

For every piece of content you publish, you should be able to articulate the value it adds, how it adds that value, and why it adds that value.

My (not very comforting) take is that there isn’t a single way of defining docs success because there is no single way of defining the role of docs in an organization. Defining docs KPIs depends on where docs stand in the organization (this is a content strategy question) and where you want to go from there. When docs are treated as a cost center or a commodity, metrics are defensive and focus on things like personal performance or output. When docs are strategic, success is defined by the success of docs themselves.

Treat documentation as infrastructure

In knowledge industries, documentation should be viewed as essential infrastructure, as a network that allows all parts of an organization to better understand what they’re doing and how they’re doing it. Docs are like short- and long-term memory, without which you can’t have identity. Docs are also what ties together different parts of the same product, such as dev docs and user docs. As Tom Johnson described in Part IV of his series on value arguments for tech comm:

Tech writers can play a tremendous role in the information flow in a company — if we see it as part of the value we add. As such, tech writers should not be siloed, introverted groups with their heads down typing away for months creating docs in isolation. We should see ourselves as an interactive group that moves information through many parts of an organization, connecting groups together and making the right parties aware of the information they need.

How do you measure the success of infrastructure? How do you measure, say, the success of roads or pipelines? While I agree that it can be challenging, I don’t share Tom’s view that these aspects are too hard or impossible to measure. Infrastructure performance is defined by how efficient the processes that rely on it become, and by how many obstacles and accidents are avoided by its maintenance. Translating that to the realm of technical documentation, you can already draw some ideas:

- Success or conversion rate of documented processes (including in-product docs)

- Time saved by customer support, development, and sales that is attributable to docs

- Reduction in incidents involving internally or externally documented processes

- Number of internal conversations quoting or linking to documentation

This might require getting a bit creative with internal systems and navigating some nasty IT policies. But if the case for this type of KPI is strong, and you have at least a partial idea of how the improvements might look (by doing Fermi estimates, for example), chances are that you’ll be able to get that data. Businesses respond better to a visible eagerness to find answers than to a shrug and a claim that docs are immeasurable.
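As a minimal sketch of what such a Fermi estimate could look like, the following Python snippet multiplies a few rough guesses to approximate the support hours saved by docs each month. Every name and figure in it (`FermiRange`, `estimate_hours_saved`, the ticket volume, the share of tickets deflected by docs, the handling time) is a hypothetical placeholder, not data from any real team.

```python
from dataclasses import dataclass


@dataclass
class FermiRange:
    """A rough low/high guess for a single quantity."""
    low: float
    high: float

    def midpoint(self) -> float:
        # Geometric mean: a common choice for order-of-magnitude guesses,
        # since it is less skewed by the upper bound than a plain average.
        return (self.low * self.high) ** 0.5


def estimate_hours_saved(tickets_per_month: FermiRange,
                         deflection_share: FermiRange,
                         minutes_per_ticket: FermiRange) -> float:
    """Multiply rough guesses to estimate monthly support hours saved by docs."""
    tickets = tickets_per_month.midpoint()
    share = deflection_share.midpoint()
    minutes = minutes_per_ticket.midpoint()
    return tickets * share * minutes / 60


# All figures below are invented placeholders; swap in your own guesses.
hours = estimate_hours_saved(
    tickets_per_month=FermiRange(400, 1600),
    deflection_share=FermiRange(0.05, 0.20),   # share of tickets docs prevent
    minutes_per_ticket=FermiRange(10, 40),
)
print(f"Roughly {hours:.0f} support hours saved per month")
```

The point is not the precision of the result but having a defensible order of magnitude to bring to the conversation. Once real data arrives, each guess can be replaced by a measured value.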

Treat documentation as a product

Want to be even more strategic? The cost of producing documentation should be considered as vital as the cost of making the product it documents. In other words, documentation should be treated as another product of the business, one that increases knowledge and raises the satisfaction of users. If you need an example of what I’m talking about, think about Stripe docs and Markdoc, and how they’ve benefited Stripe’s reputation and ecosystem. Stripe decided that docs were strategic and treated them like a product.

If docs are a product, why not use the same metrics you’d use to measure product engagement? Check for returning users or customers, for engagement (I’m a big fan of comments in documentation), and for user satisfaction. Ask sales, support, engineering, design, and others how happy they are with the docs. Do they use them? Do they learn thanks to them? Do they sell more? Of course the sample is biased, but you can be sure they know about the product and its pain points. Filter the feedback to remove the curse-of-knowledge noise, and keep the rest.
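To make one of those product-style metrics concrete, here is a small Python sketch that computes the share of docs visitors who come back on more than one day. It assumes you can export visits as pairs of visitor identifier and date; the function name `returning_visitor_rate`, the data shape, and the sample visits are all hypothetical and would need adapting to whatever your analytics tool provides.

```python
from collections import defaultdict
from datetime import date


def returning_visitor_rate(visits):
    """Return the share of visitors seen on more than one distinct day.

    `visits` is an iterable of (visitor_id, day) pairs.
    """
    days_by_visitor = defaultdict(set)
    for visitor_id, day in visits:
        days_by_visitor[visitor_id].add(day)
    if not days_by_visitor:
        return 0.0
    returning = sum(1 for days in days_by_visitor.values() if len(days) > 1)
    return returning / len(days_by_visitor)


# Invented sample data, for illustration only.
sample_visits = [
    ("a", date(2024, 1, 1)), ("a", date(2024, 1, 3)),  # came back
    ("b", date(2024, 1, 1)),                           # single visit
    ("c", date(2024, 1, 2)), ("c", date(2024, 1, 2)),  # two hits, same day
    ("d", date(2024, 1, 2)), ("d", date(2024, 1, 5)),  # came back
]
rate = returning_visitor_rate(sample_visits)
print(f"Returning visitors: {rate:.0%}")
```

Counting distinct days rather than raw hits keeps a single long reading session from looking like loyalty. Whether a day is the right window is itself a judgment call that depends on how your docs are used.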

User research is the other (qualitative) way to get meaningful insights. Thanks to user tests and user interviews, you can learn about things that were previously invisible. Feedback widgets, net promoter scores, and surveys are frequently only taken by the angry, so they only tell half the story. One of the most important benefits of qualitative user research, much like field studies or case studies, is that it can put you on the right track as to what to measure, which brings me to the last item: content strategy.

If the success of the product and its docs is shared, then docs should live in the province of product, grazing alongside UI elements. Products and docs should link to each other as much as possible (that includes CLI output, yes). That’s what hyperlinks were invented for. Keeping docs in remote, hard-to-find, hard-to-browse support kennels helps no one, and it certainly doesn’t facilitate measurement.

Measuring requires moving what you want to measure somewhere visible.

Don’t measure for the sake of measuring

There is one situation where measuring performance can be worse than not measuring anything at all: measuring the wrong things. The worst thing you can do when measuring docs performance is either using a secondhand set of metrics, passed down from a parent department or team, or using metrics without giving them some thought. In both cases, you end up basing decisions on irrelevant data. This is not to say that engineering or marketing metrics are not good, but make it your call first.

In the field of software documentation, metrics that don’t really mean anything in the context of technical writing are also the bane of engineers’ existence. Lines of code (or docs), for example, are almost universally shunned. A similar thing happens with story points in Scrum. In both cases, the problem does not lie in the metric itself, but in its mindless usage: just because you can measure something doesn’t mean that you should. All the best performance indicators require thinking hard about your situation.

Let me add a Step 0 to Bob Watson’s excellent list of actions:

0: Think about it and do some estimates

1: Measure it

2: Promote it

3: Repeat it